INSUBCONTINENT EXCLUSIVE:

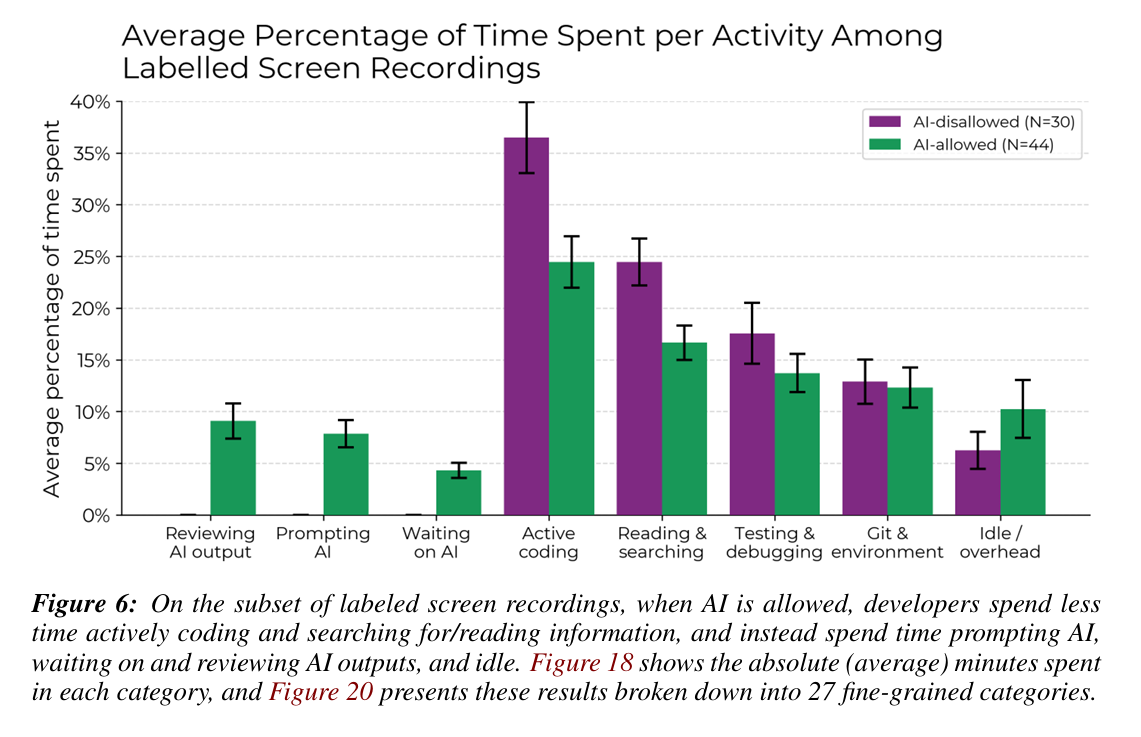

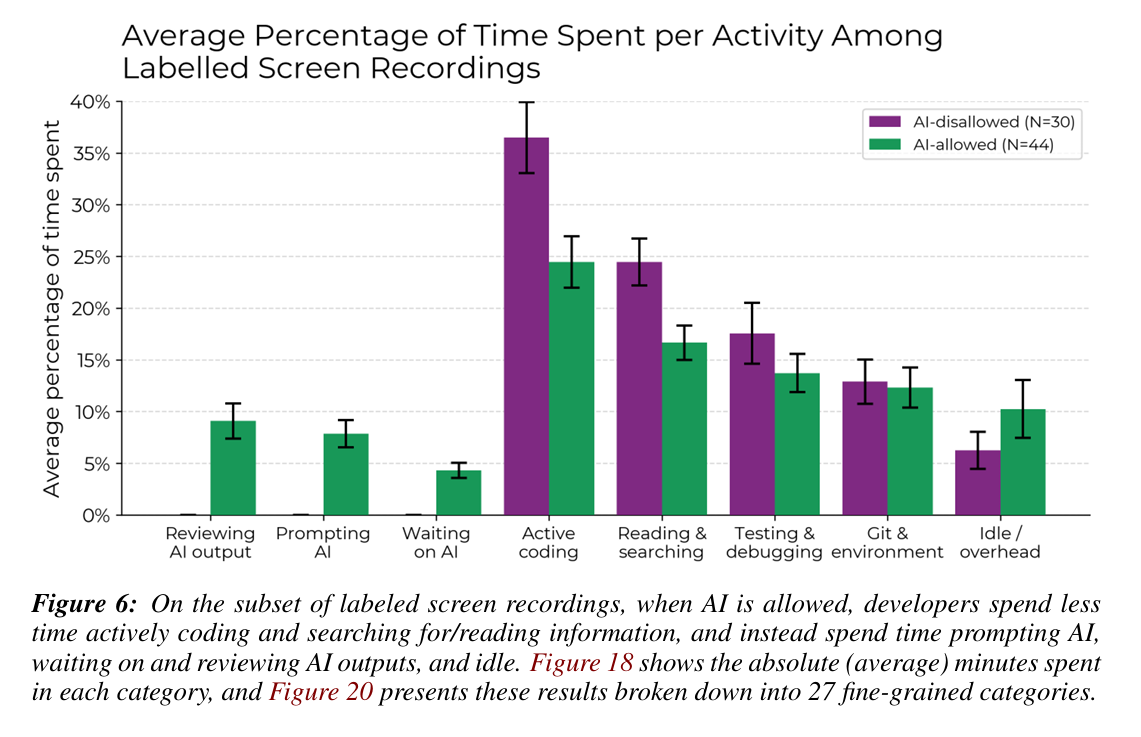

Time saved on things like active coding was overwhelmed by the time needed to prompt, wait on, and review AI outputs in the study.


Credit: METR

On the surface, METR's results seem to contradict other benchmarks and experiments that demonstrate increases in coding efficiency when AI tools are used. But those often also measure productivity in terms of total lines of code or the number of discrete tasks/code commits/pull requests completed, all of which can be poor proxies for actual coding efficiency.

Many of the existing coding benchmarks also focus on synthetic, algorithmically scorable tasks created specifically for the benchmark test, making it hard to compare those results to those focused on work with pre-existing, real-world code bases.

Along those lines, the developers in METR's study reported in surveys that the overall complexity of the repos they work with (which average 10 years of age and over 1 million lines of code) limited how helpful the AI could be. The AI wasn't able to utilize "important tacit knowledge or context" about the codebase, the researchers note, while the "high developer familiarity with [the] repositories" aided their very human coding efficiency in these tasks.

These factors lead the researchers to conclude that current AI coding tools may be particularly ill-suited to "settings with very high quality standards, or with many implicit requirements (e.g., relating to documentation, testing coverage, or linting/formatting) that take humans substantial time to learn." While those factors may not apply in "many realistic, economically relevant settings" involving simpler code bases, they could limit the impact of AI tools in this study and similar real-world situations.

And even for complex coding projects like the ones studied, the researchers are also optimistic that further refinement of AI tools could lead to future efficiency gains for programmers. Systems that have better reliability, lower latency, or more relevant outputs (via techniques such as prompt scaffolding or fine-tuning) "could speed up developers in our setting," the researchers write.

Already, they say there is "preliminary evidence" that the recent release of Claude 3.7 "can often correctly implement the core functionality of issues on several repositories that are included in our study."

For now, however, METR's study provides some strong evidence [...] scenarios.